OM System OM-1 & OM-1 (II)

- Thread starter cacau



I tested with a single AF point, and it was immediately obvious that the 75mm had a problem only in CAF and only beyond a certain distance, somewhere upwards of 10 meters. I haven't run other tests with multiple points. I don't think it changes anything, but I'll check at the first opportunity.

Congratulations, may it serve you well.

It lists some positives and some negatives in a balanced presentation of the camera, exclusively for its use in BIF photography.

It's odd, though, that England had so many supply problems with accessories. Here, batteries and grips arrived after the first quarter, along with quite a few cameras.

The 75mm was also tested on a second OM-1, with the same result. I'll report it to the company along with a second bug I discovered, which concerns CAF-TR.

…..

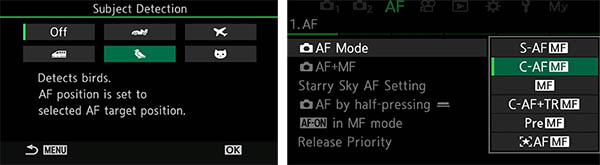

If you have enabled the setting in the AF menu (second page) that displays the multiple small, shifting AF points (AF area pointer = ON2), the usual box that every Olympus has shown in CAF-TR disappears and, for the first time on an Olympus camera, the multiple small AF points appear in CAF-TR just as they do in CAF.

The problem is that while in CAF these points stay within the outline of the AF target box, in CAF-TR they not only fail to do so but spill outside the outline and wander anywhere without following the subject.

If the setting is switched to ON1, the classic CAF-TR box appears and follows the subject normally, but you lose the very useful small AF points in CAF.

Until it is fixed (if it is fixed), the only solution is to use two separate custom memories: save CAF with ON2 in one and CAF-TR with ON1 in the other.
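To make the two-memory workaround concrete, here is a minimal sketch of it as plain data. It is only an illustration: the slot names `memory_1` and `memory_2` and the helper `memory_for` are hypothetical placeholders, not camera menu labels or any OM System API. Only the ON1/ON2 pairing comes from the post above.

```python
# Hypothetical model of the workaround: one saved profile per AF mode.
# Slot names are placeholders, not the camera's actual menu labels.
CUSTOM_MEMORIES = {
    "memory_1": {"af_mode": "CAF", "af_area_pointer": "ON2"},
    "memory_2": {"af_mode": "CAF-TR", "af_area_pointer": "ON1"},
}


def memory_for(af_mode: str) -> str:
    """Return the slot whose saved profile matches the requested AF mode."""
    for slot, profile in CUSTOM_MEMORIES.items():
        if profile["af_mode"] == af_mode:
            return slot
    raise KeyError(f"no saved memory for AF mode {af_mode!r}")
```

Switching memories then switches the AF mode and the pointer setting together, so CAF-TR never runs with ON2.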

Part of the interview OMDS executives gave to IR, concerning the OM-1's autofocus:

What’s the story with AI Detection AF (AIAF), Tracking AF (TR-AF), Continuous AF, Target AF, etc?

There's been a lot of confusion about how the OM-1's AI-based subject detection feature works with autofocus tracking. It turns out that the tracking done in normal C-AF mode is a separate system from "Target AF". See the text below for an explanation of the differences.

RDE: Finally, a rather detailed question about autofocus operation: What’s up with AF tracking and continuous AF mode, vs using Target AF in continuous AF mode. It seems that the combination of AF tracking and continuous AF doesn’t work well, to the point that you advise against it. Could you explain a bit about how the various modes work, and what it is about continuous AF mode that interferes with AF tracking?

OMDS: We recommend using Target AF in C-AF when shooting with AI Detection AF. Movements of the subject detected are predicted, allowing AF with high level of tracking performance to be achieved.

OMDS: When tracking is used, tracking the primary subject with the integration of data such as color and total screen vector detection other than subject detection is given priority, so subject detection is handled as part of the information to be tracked, and thus other detection results may be given higher priority than detection of the subject alone.

When tracking, if the subject is lost, AF stops, and is restarted when the subject is detected again, or the button is half-pushed once more. When operating with subject detection alone, operation switches to AF operation in zone settings when a subject is not detected.

OMDS: If you try to shoot the subject, for example a cat or a bird, we recommend Target AF and CAF -- not tracking AF -- with AI subject detection. On the other hand, if you tried some other subject [not an AI subject, just a moving object], we recommend C-AF + TR-AF. Because the AF process is different between C-AF and Tracking AF, so if you use AI Detection AF or AIAF, it’s working only with the information from AI detection, but if you use Tracking AF, the camera AF works not only detection AF, but other information, for example, color information or movement direction and so on. Sometimes, only the AI detection process is better than several AF processes with several pieces of information. This is the reason if you turn on the AI Detection AF or AIAF, we recommend C-AF, but if you tried to shoot a moving subject and its movement is unpredictable, we recommend Tracking AF.

RDE: So I think I understand. The AI detection, the neural network is looking at the image, and all of the colors, the shapes, where the eyes are, that sort of thing. But it’s not relying on the PDAF information to find the subject, it’s just looking at the picture like a human would. On the other hand, when you’re Tracking AF, it’s looking at distance over time and how it’s moving, but not at what the information is like. Although you said that Tracking AF also uses color and shape. So… let me try to boil that down.

RDE: So AIAF is really looking to find the subject just based on what the image looks like to it. And once it identifies the subject, then it will use PDAF just in that area (I think) to focus. Whereas tracking AF, it’s looking for general moving shapes, it’s a blob of red and it’s moving based on distance information and so on. So really they’re just two different things, and trying to combine them doesn’t work well. [Actually, they don’t combine at all, they’re two entirely different systems.]
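The recommendations in the exchange above can be condensed into a small decision sketch. This only restates what OMDS says in the interview. The function names and the returned strings are made up for illustration and do not correspond to any camera firmware or API.

```python
def recommended_af(subject_is_ai_detectable: bool) -> str:
    """Mode OMDS recommends, per the interview, for a given kind of subject."""
    if subject_is_ai_detectable:
        # e.g. a cat or a bird: rely on AI subject detection alone
        return "C-AF + Target AF with AI subject detection"
    # any other moving object, especially with unpredictable movement
    return "C-AF + TR-AF"


def on_subject_lost(using_tracking: bool) -> str:
    """What the interview says happens when the subject is no longer found."""
    if using_tracking:
        return "AF stops until the subject is re-detected or the shutter is half-pressed again"
    return "AF continues using the zone settings"
```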

My summary of how AIAF and Tracking AF work…

There was a lot of back and forth in the above, so I've made this summary a separate subhead here. This is my take on the key points about how AIAF and Tracking AF work, and why you can't combine the two:

- AIAF and Tracking AF are two fundamentally different and separate systems.

- AIAF looks at the scene in front of the camera the way a human would, identifying subjects by their appearance. Once a subject has been identified, the camera uses the PDAF pixels in that specific area to set focus.

- Tracking AF is the conventional AF that we’ve long been familiar with: It uses a combination of distance (from the PDAF pixels), color and shape to identify the subject (or to follow one that you’ve told it to via the user interface), then uses its movement over time to predict its likely future position. The color and shape help it avoid being confused by other objects in the scene as the subject moves around, but there isn’t the sort of AI-based “intelligence” to recognize an object as a specific type of subject.

- If you’re doing continuous shooting with AIAF, the camera is basically re-identifying the subject in each frame and then focusing on it. It doesn’t make any predictions about the subject's future position based on past behavior.

- AIAF’s subject tracking frame by frame is like:

  - “Ah, there’s a bird. Focus, click.”

  - (next frame) “Ah, there’s a bird. Focus, click.”

  - etc, etc...

- Tracking AF is like:

  - “OK, the human told me to focus on this thing here. It’s a pink, roundish blob about so big, and it’s this far away from me right now. Focus, click.”

  - “OK, that blob is a bit more to the right now and 2 feet closer to me. Focus, click.”

  - “All right, between the previous two frames, the blob moved closer to me by two feet and a bit to the right, so I’m expecting it to show up a bit more to the right and another 2 feet closer to me.”

  - “Shift focus by 2 feet.” (This can happen ahead of time, before it's time to grab the next frame.)

  - “Look at the scene. Ah, sure enough, there’s that blob right where I expected it to be, but it’s only about 1.8 feet closer to me this time. I’ll make a note of that for next time...”

  - “Focus, click.”

  - and so on…
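The contrast in the list above can be sketched in a few lines of code. This is a toy model under stated assumptions, not how the camera actually works: the `Observation` type, the function names, and the use of simple linear extrapolation are all illustrative choices of mine. The interview does not say which predictor Tracking AF uses.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    x: float         # horizontal position in the frame (arbitrary units)
    distance: float  # subject distance in feet


def predict_next(prev: Observation, curr: Observation) -> Observation:
    """Tracking-AF-style guess: assume the last frame-to-frame change repeats."""
    return Observation(
        x=curr.x + (curr.x - prev.x),
        distance=curr.distance + (curr.distance - prev.distance),
    )


def aiaf_target(detected: Observation) -> Observation:
    """AIAF as summarized above: focus where the subject is detected now, no prediction."""
    return detected


# The blob moved one unit right and 2 feet closer between the first two frames.
prev, curr = Observation(0.0, 20.0), Observation(1.0, 18.0)
predicted = predict_next(prev, curr)        # expects another 2 feet closer
actual = Observation(2.0, 16.2)             # it only came 1.8 feet closer
error = actual.distance - predicted.distance  # the "note for next time"
```

The point of the sketch is the difference in inputs: `predict_next` needs the subject's history, while `aiaf_target` looks only at the current frame.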

Personally, I suspect the integration of distance information into AI-based AF algorithms is something that the R&D departments of multiple camera companies are probably looking at right now. As incredibly capable as modern AF systems are, this is still the area where there's the most room for future improvement. That said though, the speed, accuracy and intelligence of current AF systems have reached levels film-era photographers could only have dreamt of.

How does the OM-1 work with some of the best lenses Olympus ever designed?

Try OM System OM-1 camera with Four Thirds lenses


They finally fixed it. I just tried the 75mm and it focuses at all distances in CAF.

I want to see whether the EVF's performance also improves when tracking a subject in CAF in low light with the shutter button half-pressed. In the summer, when I tried it in such conditions, the EVF refresh rate dropped and I would switch to SAF. Below a certain light level it starts to stutter (shimmering), and if the light drops further, performance falls through the floor.

Admittedly, on the S1R the camera itself disables CAF and switches to SAF when the light gets very low. Either way, it should be made clear to the user whether or not CAF can be used in low light.

With the LCD EVF of the EM1.2/1.3 there were no such issues. The viewfinder might lose color and show noise as the light fell, but it was faster. On the OM-1, perhaps because of the OLED technology and higher resolution, the processor may be unable to keep up the EVF's speed in low light, or it may need some tuning so that following a moving subject in low light becomes smooth.

I'll test this too with the new firmware and report back.


Spyros, I did it over Wi-Fi with OI.Share. This time, though, unlike every other update I've done, it didn't back up the settings at the start, and at the end, once the firmware had been installed, it didn't try to load the settings back.

That is, when the OK appeared after the firmware was loaded and I rebooted the camera, I found that my settings had not been lost, and neither had the clock, which usually needed setting again.

Spyros, if you didn't manage it with OW, there is also the method of updating directly from the card, if you have the file downloaded.

I'll try that too, Kostas.

Unfortunately, something is up with OM Workspace today.

In the discussion here they provide the file and also describe how to update via memory card.

CorfuS

Established Member

- 17 November 2017

- 249

It's very good to have feedback like yours on BIF after the firmware. With the LEICA 100-400, I assume?

They may not have added new features with this firmware, but in one go they fixed almost all the known bugs the camera had shown, and it is now a reliable tool in all conditions. The rule for years has been that every new model needs 1-2 firmware updates to settle down and have its minor problems fixed. This usually happens within the first six months to a year, and afterwards is the best time to buy, because by then the price has almost always settled too.

The fixes confirmed with the new firmware (after user testing):

1.- the focusing problem in CAF beyond 8-10 meters with the f1.8 lens series (17/1.8, 25/1.8, 45/1.8, 75/1.8)

2.- the noise some lenses made while focusing, such as the 35/3.5 macro (I hadn't noticed it)

3.- the problem of SAF giving focus confirmation at night in low light without having actually focused (mine rarely did it)

The only thing I haven't seen fixed is in CAF-TR when the menu setting that displays the small AF points is enabled: there it gets confused and does its own thing, focusing outside the point where you initially locked focus and not following the subject. To make it behave like the EM1.3 and earlier models, you have to turn off the small AF points.

I haven't tested whether the EVF has improved in low light when panning. Someone wrote that the motion has become smoother, without the EVF stuttering and without the frame rate dropping much, but I believe this also depends on the lens mounted and on how much light ultimately reaches the sensor.


CorfuS

Established Member

- 17 November 2017

- 249

Yes, it's with the Leica 100-400.

Focused, concise reviews from experienced users, written after thousands of photos with the camera in real situations (not charts), where the camera has to show what it can do, are in my view the most useful for conveying the advantages or problems one will encounter with the equipment, and whether there are workarounds. This one is from a safari trip, with his wife beside him shooting a SONY A7R4 and a 200-600mm lens.

My OM Systems OM1 Review

Because I always have ON2 enabled in CAF and don't want to set up another memory for CAF-TR with ON1, I can't work out what it is doing or whether it follows the subject in CAF-TR with ON2 enabled, because the small AF boxes wander anywhere and don't follow the subject I first targeted.

So I have dropped CAF-TR from my options entirely (even though it is useful for automatic recomposing within the scene). Besides, OMDS does not recommend it in combination with AI detection, since in practice they are two functions that do not work together.

According to the video above, however, CAF-TR may be useful as an extra option in combination with Face/Eye Detection when the face moves and turns.

I hope that in the big firmware update they manage to integrate human detection into the AI menu, so that it is no longer separate with its own activation, and the whole process becomes simpler and easier to understand (as it is on Panasonic cameras and those of other companies).

I also think it would be simple, in theory, to add an option in AI mode and in Face/Eye Detection to move from one recognized target to another using either the arrow keys or the trackpad, i.e. without using an extra programmable button.